MRISAR's circa 2002 Adaptive Tech R&D Project:

Rehabilitation Robotics; Facial Feature Controlled Activity Center

MRISAR’s Rehabilitation Robotics; Facial Feature Controlled Activity Center with Artificial Sense of Touch, For Paralysis Victims.

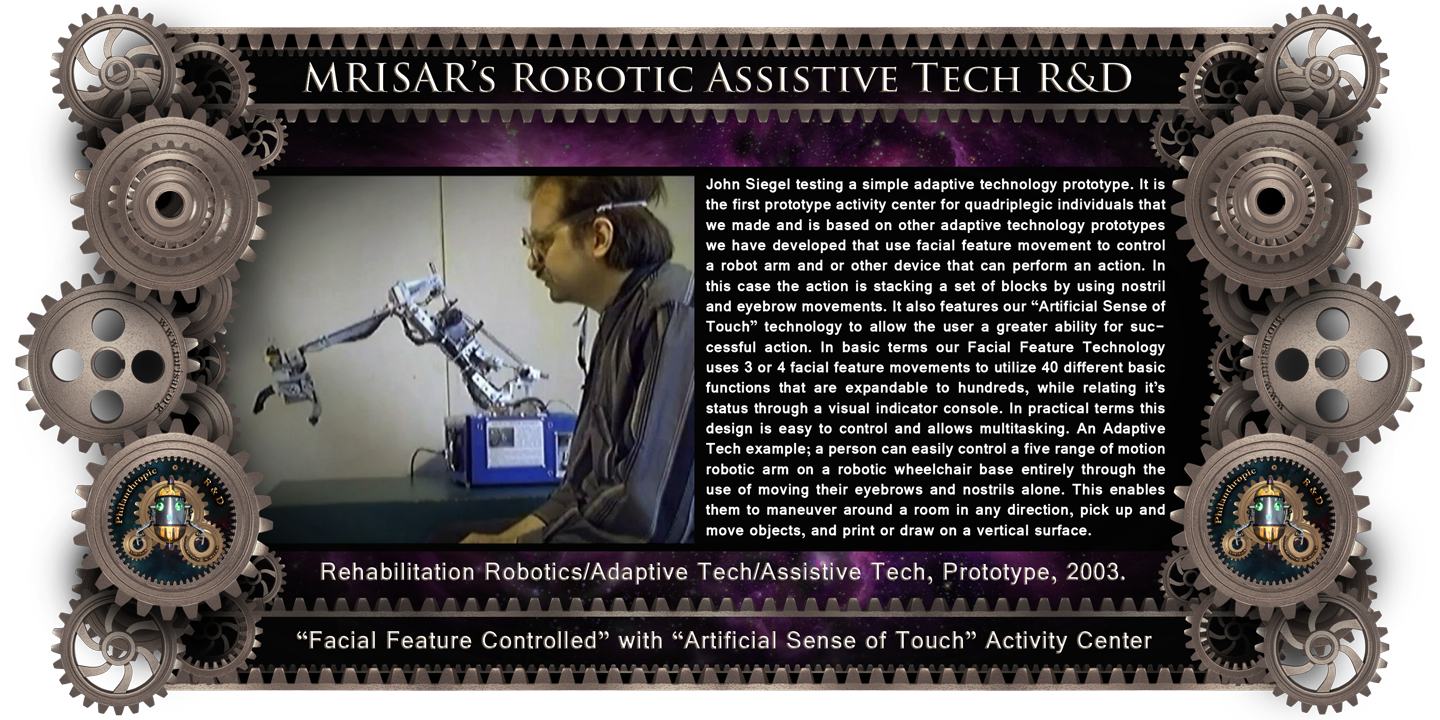

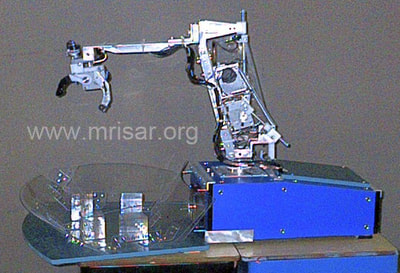

The device pictured above is a simple adaptive technology experiment. It is the first in a series of prototype activity centers for quadriplegic individuals, and it builds on our earlier prototypes that use facial feature movement to control a robot arm and/or another device that performs an action. In this case the action is stacking a set of blocks using nostril and eyebrow movements. In basic terms, our Facial Feature Technology uses 3 or 4 facial feature movements to access 40 different basic functions, expandable to hundreds, while reporting its status through a visual indicator console. In practical terms this design is easy to control and allows multitasking. As an adaptive tech example, a person can control a five range of motion robotic arm on a robotic wheelchair base entirely by moving their eyebrows and nostrils. This enables them to maneuver around a room in any direction, pick up and move objects, and print or draw on a vertical surface.

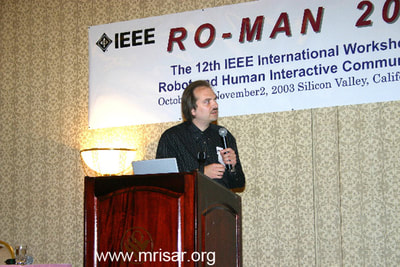

In the near future a variety of more advanced activity centers will be produced and marketed. They will focus on finer movements for moving objects, drawing and printing. Other categories will follow as progress continues. Every effort will be made to develop methods of producing the devices at reasonable cost, so that everyone who needs one can afford it. Besides the activity centers, our research and development continues to incorporate facial feature movement into devices that will eventually allow quadriplegic individuals to become more independent. We are also developing low-cost artificial limbs.

We were the only entrepreneurs chosen worldwide to give an international presentation and publication of our research and development in rehabilitation robotics before IEEE’s “2003 RO-MAN” International Conference on Rehabilitation Robotics (Adaptive Technologies for the disabled). The presentation was given in person at the conference. From there it was presented via the web and viewed globally at major universities and other facilities. STRAC II was part of our presentation. It was published by IEEE - RO-MAN 2003, 12th International Workshop on Robot & Human Interactive Communication. Sponsored by: IEEE Industrial Electronics Society, Robotics Society of Japan, Hosei University, Hosei University Research Institute, California, New Technology Foundation. Technical sponsors: IEEE Robotics and Automation Society, Virtual Reality Society of Japan. With additional support from faculty and staff of: Stanford University, VA Palo Alto Health Care System, Immersion Corporation, Intuitive Surgical Inc.

Our work in adaptive tech R&D and other subjects is world renowned and awarded; however, so far we have had to fund it ourselves. Our Cybernetics and Robotics work was published by Cambridge University's international conference on adaptive technologies, "CWUAAT" (Cambridge Workshop on Universal Access and Assistive Technology), in March of 2002.

Our “Facial Feature Controlled Technology” and “Artificial Sense of Touch Technology” were awarded, published and presented before ICORR 99, the International Conference on Rehabilitation Robotics. Our project was 1 of the 5 rehabilitation robotic prototypes chosen worldwide to be demonstrated before ICORR at Stanford University, California. Our work was published by Stanford University as part of the proceedings. Our original, innovative circa-1990s research & development in "Facial Feature Controlled Technology" and "Artificial Sense of Touch Technology" (adaptive technology prototypes for the disabled) has helped pioneer those fields!

TECHNICAL INFORMATION

The device above is a version of our Robotic Arms with Feature Control Interface. A basic description of our ongoing research and development follows.

This device consists of a robotic arm that uses a feature control sensor apparatus to control dozens of functions. It is configured to let the user control a five range of motion robot arm and a number of associated key functions, using facial features as the control input. In real applications the device would most often be mounted on a motorized wheelchair base that would also be controlled by the feature control interface.

This device is the fourth prototype in a series of feature control and gesturing experiments created in our shop over the past few years. Each predecessor was configured as a robotic wheelchair with various attributes added according to specific design requirements. These attributes have included a five range of motion robotic arm with a writing/drawing implement, servo-operated steering and main drive wheels, basic navigation assist, a vital signs monitor and linear actuator seat-raising mechanisms. The experiments were aimed at developing the basis for a series of simple interfaces and electromechanical adaptations for individuals with severe paralysis.

The sensors in the interface are configured to detect changes in facial feature movement and interpret the changes as control input data. The facial feature control sensors self-calibrate when a given facial feature relaxes, which helps prevent false triggering. In effect the unit resets its zero mark according to the features in their relaxed state. Each sensor comprises a mechanical element and an electronic sensor that work in unison. The specific sensor type varies with the type of feature being detected.
Most of the sensors consist of a roller, lever or similar apparatus that breaks an emitter beam, causing the detector to execute an “on state” command. The emitters and detectors are basic IR beam-break devices. We have also used a variety of miniature mechanical switches, potentiometers and other devices for various experiments. The simple levers and rollers have mechanical slip assemblies that act as the calibration device. So far the facial features monitored successfully have been the nostrils, the eyebrows and the jaw muscles, as measured during a broad smile or the tensioning that occurs during a biting action. Naturally other features can be monitored, but we have focused on the easiest to integrate thus far.

The main control circuit uses a simple logic interpretation format for the data collected from the sensors. In this format the device’s movements are individually selected and initiated in sequence by a specific feature movement. Nostril movements, or other movements depending on availability, act as the signal for the sequence control to count through the options. If available, the right nostril is used for the basic sequence selection count. The count is a simple run-through of the different degrees of freedom for the arm and accessories. The left nostril is used for a quick select sequence that chooses the primary selection area, such as the type of device in use: (Select robot arm) or (Select wheels for navigation). Between the two nostrils, hundreds of functions could, in theory, be accessed in seconds.

Next, another set of facial features is used to control the selected degree of freedom. The control is kept simple so that the unit operates as a simple tool rather than as an automation of movements. For example, features such as the eyebrows can be used to activate the appropriate movement once the degree of freedom has been selected.
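
The two-nostril selection scheme described above can be illustrated with a short software sketch. This is only an illustration under stated assumptions, not our control circuit: the group names, the function names within each group, and the wrap-around behavior at the end of a count are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical device groups and functions. The text names only
# "robot arm" and "wheels for navigation" as example selections;
# the individual function names are assumptions for illustration.
GROUPS = {
    "robot arm": ["base", "shoulder", "elbow", "wrist", "gripper"],
    "wheels": ["drive", "steer"],
}


@dataclass
class Selector:
    """Tracks which device group and which function are currently selected."""
    groups: dict = field(default_factory=lambda: GROUPS)
    group_index: int = 0
    function_index: int = 0

    def current_group(self):
        return list(self.groups)[self.group_index]

    def current_functions(self):
        return self.groups[self.current_group()]

    def left_nostril(self):
        """Quick select: move to the next device group and restart the count."""
        self.group_index = (self.group_index + 1) % len(self.groups)
        self.function_index = 0

    def right_nostril(self):
        """Sequence count: step to the next function in the current group."""
        count = len(self.current_functions())
        self.function_index = (self.function_index + 1) % count

    def selection(self):
        """Return the (group, function) pair that a status display could show."""
        return self.current_group(), self.current_functions()[self.function_index]


selector = Selector()
selector.right_nostril()   # count from "base" to "shoulder"
selector.left_nostril()    # quick select the next group, "wheels"
print(selector.selection())
```

Each nostril movement is treated as a single discrete event, which matches the “on state” output of a beam-break sensor. Only one function is selected at any moment.
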
The format for the eyebrow control interpretation classifies movements on the left as regressive and movements on the right as assertive. Accordingly, the left eyebrow corresponds to actions such as counterclockwise, down, left turn, off and back up. The right eyebrow corresponds to actions such as up, forward, right turn, clockwise and on. Since only one function is accessed at a time, the types of actions within a specific function are easy for the user to monitor.

In the actual application the display is miniaturized and eyeglass-mounted as a digital readout fitted with a lens that focuses the image from the readout into the eye and onto the retina. Because the focal length of the readout lens is drastically different from the focal length of regular vision, the patient can see the readout while looking across a room.

In a robotic wheelchair application, certain automatic functions are also provided to help the wheelchair avoid tipping and other basic dangers. This is accomplished with inclinometers, linear actuators, limit switches and similar devices that force the unit to remain level or to refuse a command to climb overly steep inclines. Limits are also used to automatically prevent over-travel for each of the robotic arm’s degrees of freedom.

The effectiveness and speed of feature control increase if more of the patient’s motions are intact, because the patient can supply additional control input through additional sensors. If fine movements are available, sensors such as inclinometers, potentiometers and encoders can be used to obtain fluid movements for handwriting and some detail work. This technology can also be used with an interface for computer operation, telepresence control and other control systems that would ideally be operated on a hands-free basis.
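
The left/right eyebrow convention and the over-travel limits described above can also be sketched in software. This is a minimal illustration, not the prototype's circuit: the prototypes use physical limit switches, whereas this sketch clamps a position value, and the function names, units and limit values are hypothetical.

```python
# Hypothetical travel limits for two functions, in arbitrary position units.
# In the hardware this role is played by limit switches, not numbers.
LIMITS = {
    "elbow": (0, 90),
    "gripper": (0, 10),
}


def eyebrow_step(function, position, eyebrow, step=1):
    """Return the new position after one eyebrow activation.

    Right eyebrow is assertive (up, forward, clockwise, on): position rises.
    Left eyebrow is regressive (down, back up, counterclockwise, off):
    position falls. The result is clamped so it never leaves the
    function's travel limits.
    """
    if eyebrow not in ("left", "right"):
        raise ValueError("eyebrow must be 'left' or 'right'")
    low, high = LIMITS[function]
    delta = step if eyebrow == "right" else -step
    return max(low, min(high, position + delta))


position = 88
position = eyebrow_step("elbow", position, "right", step=5)
print(position)  # held at the upper limit instead of overshooting
```

Because only one function is selected at a time, a single signed step per eyebrow movement is enough to cover every action in the left/right lists above.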

Terms: 7-year warranty against defects in our workmanship; free lifetime phone/internet technical support; lifetime parts supply sourcing for our exhibits at reasonable prices. Contact us for price information.